We set up the new lamps. They seem to provide the required amount of lighting, and certainly don't interfere with the projection. It seems possible, however, that there is more light coming in from the window than there was last week, as the cameras seemed to be picking up motion even with the lamps off (but not with the lamps off and the window closed). The cameras did pick up motion with the lamps on and the window closed, so the lamps work as desired.

The readme file for the Pd patches: README

Put stickers on the keyboard to mark the C minor pentatonic. It now looks like a keyboard from an elementary school music class. This room is becoming a juxtaposition of all sorts of contrary things, what with lace and keyboard stickers and lamps.

We all spent yesterday afternoon revising things and trying to figure out how to use MIDI output on a Mac. This synthesizer looked like it might be useful, and seemed to work. However, after taking input from the floor sensors for a while (maybe 30 seconds?) it stops outputting any sound at all. Evidently the floor sensors are the equivalent of "Hammering a lot of keys or holding a sustain pedal down too long," as this is known to cause such problems. It seems to be implied that it might not happen with all instruments, but it doesn't specify which ones do and don't have problems. Although I suppose it could equally well be read that all instruments have problems, but of varying severity. We may now be resorting to audio output.

Added a few comments/instructions to the installation patches to better explain things. We set up the installation and wandered through to see how it looked/sounded.

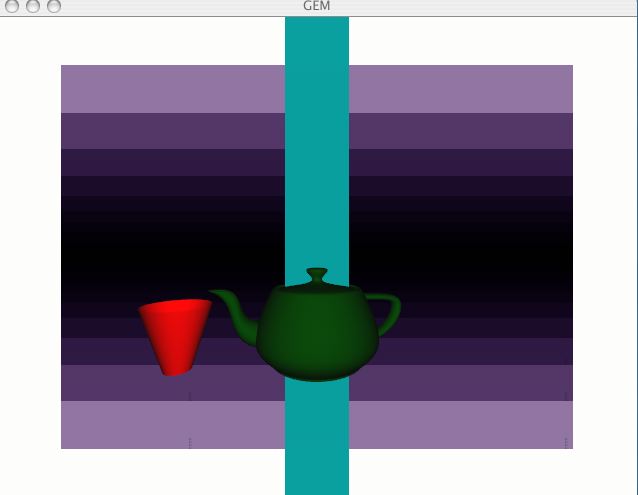

Setting up the installation to have people walk through it ...

It was interesting to see people thinking of this as art ... I hadn't been, really, I think I was thinking of it as sort of ... a thing to do with all the programming and suchlike. Maybe a project more than an artwork. And then it sort of became something ... artsy, when other people were talking about it. Which was sort of odd.

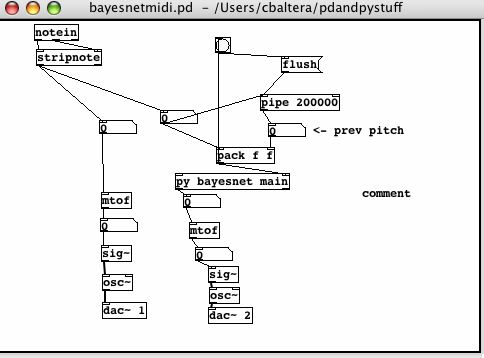

Playing with wavetables. I've added them to the Bayes net and graphics patch, and to the HMM chord progression patch. The one I currently have in the Bayes net patch seems sort of growly. I think I will change it and see if I can find a nicer one.

I'm wondering if the growliness is caused by the number of notes playing, if the cumulative amplitude has gotten too high or something. Also, the Bayes net patch seems to harmonize with itself or the chords from the HMM patch more now that the wavetables have been added.

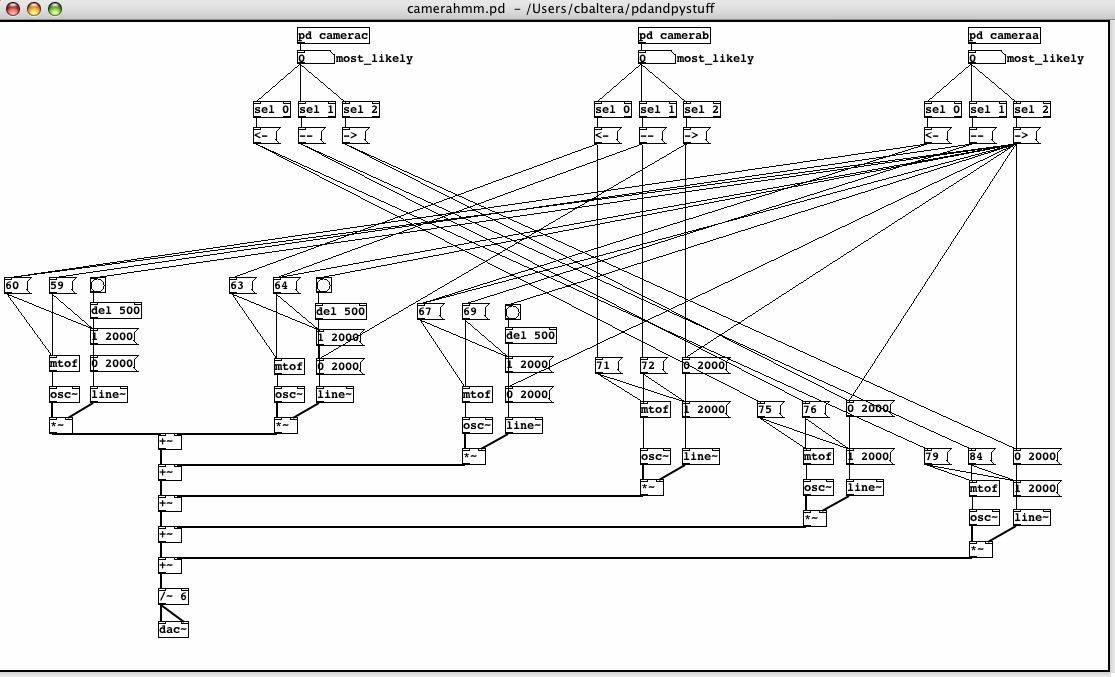

The sound does seem to be cleaner now that I've divided the signal by 7 instead of 5 before putting it through the dac~.

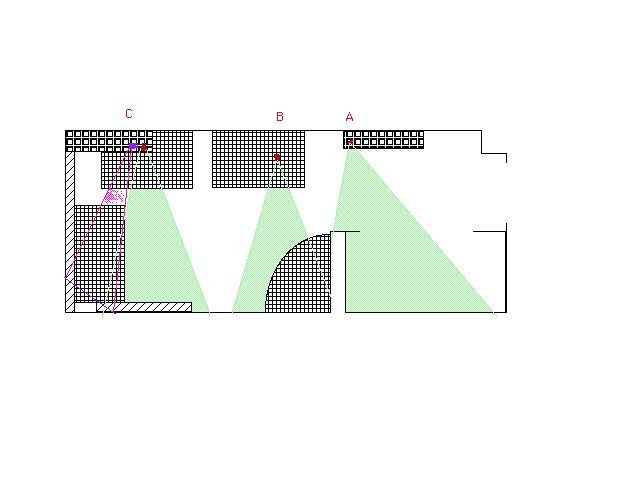

There was something here but it vanished. I think it was about editing the camera patches a little bit and retraining the HMMs. Also, there was a diagram of the approximate layout of the cameras and the projector. However, since the diagram was made before the cameras were put in their final locations, camera B should be further to the left, and camera A further to the right, and the picture is of course not to scale. The cameras are depicted in red and the projector is depicted in purple.

There wasn't anything here because things were being updated or something. However, we did manage to hang the lace from the ceiling by tacking it into the sides of two tiles, and we projected onto it. Worked on the summary/write-up a bit.

Attempting to reconstruct Tuesday's log since it vanished, and Wednesday's since it never existed in the first place.

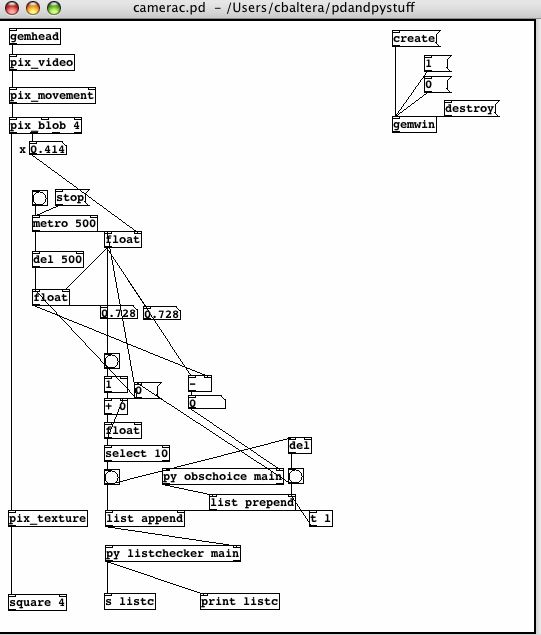

So ... what I did on Tuesday. I deleted a couple of connections from each of the camera patches, since they weren't doing anything useful (actually, I think they may have been exacerbating the artificiality of the training sequences, so it's just as well they're gone now). I recorded new training sequences for camera C, then I trained the camera C HMMs. I think they work better now than they did with the old training sequences, or at least they seem to. Taped down camera A, marked the locations of cameras A and C and the projector. Recorded new training sequences for cameras A and B, and trained their HMMs. Roughly outlined the summary.

Wednesday ... Started writing the summary, in an extremely unpolished fashion. Put up the lace. Well, I moved the table so Sara could put up the lace.

And today ... I reconstructed the above paragraphs. We set up the whole installation to run and tested it to see how it worked/sounded. There seems to be a difficulty with lighting. The projection of the teapot onto the lace is much more striking with the lights in the room turned off, but the cameras do not seem to pick up on motion in the darkness, I guess due to lack of contrast. Judy is going to look for floor and/or table lamps to use instead of the overhead lights, so that there will be sufficient illumination to permit the cameras to detect movement, but it will be localized enough not to interfere with the projection. It was also decided that the chords should cut off rather than playing indefinitely, and that they would sound better an octave lower and somewhat louder. The camerahmm.pd patch was modified accordingly. The teapot patch was also edited to use the C minor pentatonic scale to produce harmony rather than the complete C major or minor scale, as Judy thought the complete scale made the sound too busy.

The entirety of the installation seems to be the floor sensors controlling sound and graphics, the HMMs controlling sound, and the Bayes net controlling sound and graphics.

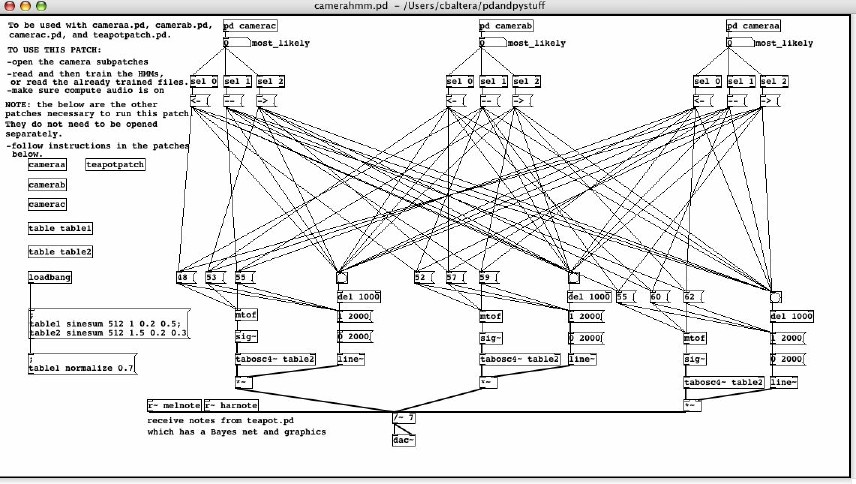

I decided there were too many windows open, but they were all necessary since none of them were subpatches. Thus, I modified camerahmm.pd so that it calls the other necessary patches as abstractions (I think that's the term), so it is no longer necessary to leave any patches open other than camerahmm.pd.

Updated versions of camerahmm.pd and the teapot patch:

Made a general README file that is basically a sentence or two about each patch and python program in the folder. I think it's as complete as it's going to get, unless I suddenly become inspired to add to it.

Hooked up the cameras and the HMMs and collected 30 sequences of 9 observations each on which to train each HMM. So it is now possible to have chords play and change as people are in the room, although I do not think the training of the HMMs is refined enough that the chords play exactly as they are ideally supposed to. There's still the problem with the training sequences being artificial in the way they start, due to jumps in between the end of one and the beginning of the next, leading to initial probabilities that are not necessarily representative of those which would be observed in any real scenario. I also think the order of the cameras may be different than what I had been envisioning, so the different notes of the chord should probably be attached to different cameras than those they are currently attached to.

I've been running some more tests with the python and Pd HMMs. Here are the observation probabilities from last week's tests.

| state \ observation | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 0 | 20% | 20% | 0 | 30% | 30% | 0 | 0 | 0 |
| 1 | 0 | 0 | 70% | 10% | 0 | 20% | 0 | 0 |
| 2 | 0 | 0 | 20% | 0 | 60% | 0 | 0 | 20% |
| 3 | 0 | 0 | 0 | 0 | 0 | 0 | 40% | 60% |

| state \ observation | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 0 | 19% | 20% | 0 | 17% | 44% | 0 | 0 | 0 |
| 1 | 0 | 0 | 53% | 23% | 0 | 25% | 0 | 0 |
| 2 | 0 | 0 | 43% | 0 | 38% | 0 | 0 | 18% |
| 3 | 0 | 0 | 0 | 0 | 0 | 0 | 40% | 60% |

| state \ observation | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 0 | .9% | 1.5% | 8.5% | 4% | 9% | 17.4% | 24% | 34% |
| 1 | 4% | 5% | 7% | 20% | 53% | 5% | .6% | 2.9% |
| 2 | .8% | 2% | 73% | 11% | 2% | 4% | 3% | 3% |
| 3 | 20% | 16% | 6% | 4% | 13% | .5% | 6% | 36% |

| state \ observation | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 0 | 19.9% | 20.8% | 0 | 19.9% | 39.4% | 0 | 0 | 0 |
| 1 | 0 | 0 | 51.2% | 21.7% | 0 | 27% | 0 | 0 |
| 2 | 0 | 0 | 45.3% | 39.5% | 0 | 0 | 0 | 15.2% |
| 3 | 0 | 0 | 0 | 0 | 0 | 0 | 41.3% | 58.7% |

| state \ observation | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|
| 0 | .7% | .8% | 9.2% | 5.6% | 11.1% | 20.8% | 21.6% | 30% |
| 1 | 4.3% | 5.1% | 8.5% | 18.9% | 52.6% | 6.1% | 1.3% | 30% |
| 2 | 1.7% | 2.3% | 70.1% | 12.4% | 2.1% | 4.6% | 2.7% | 4.1% |
| 3 | 16.1% | 15.7% | 6% | 6.3% | 13.9% | .8% | 6.8% | 34.5% |

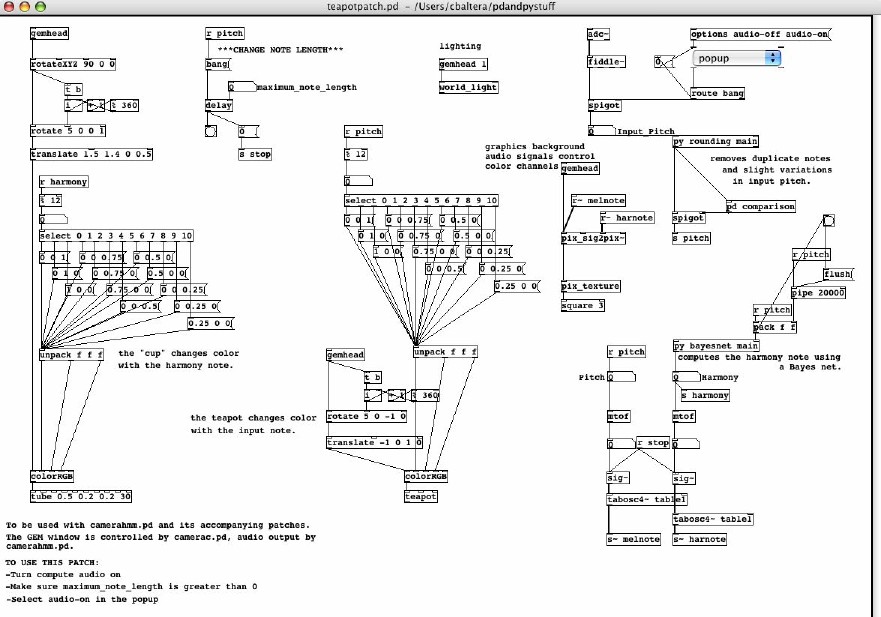

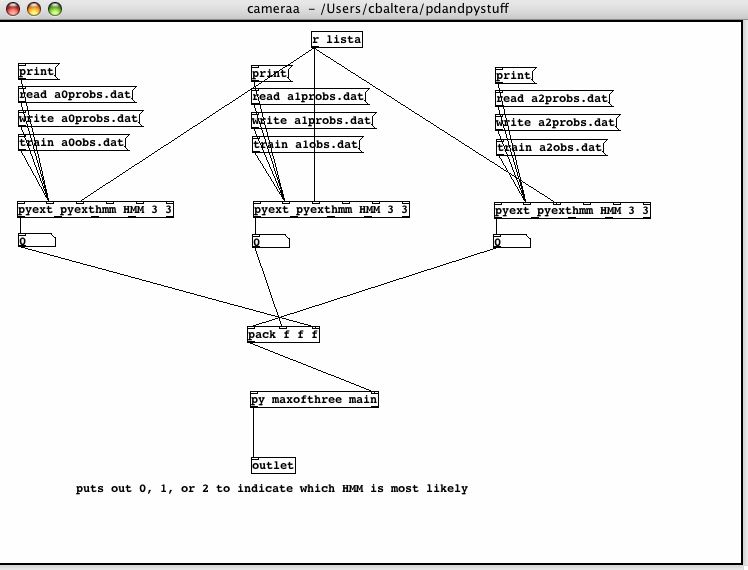

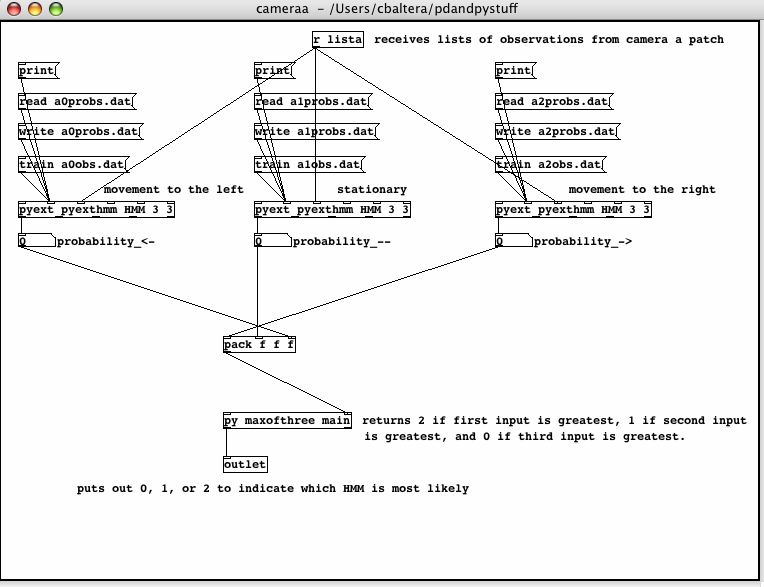

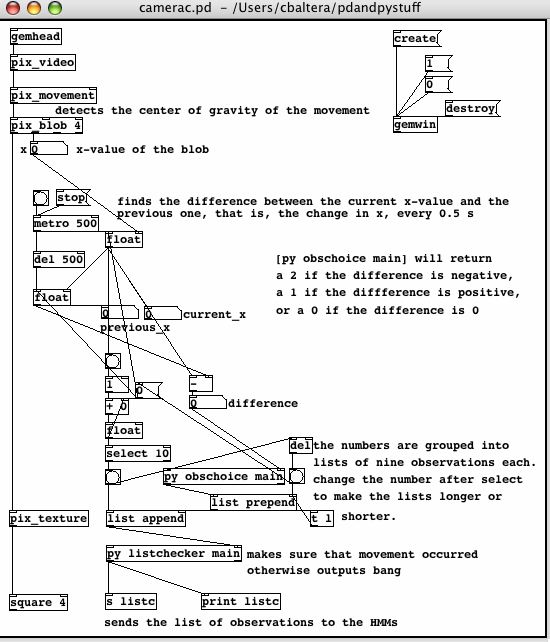

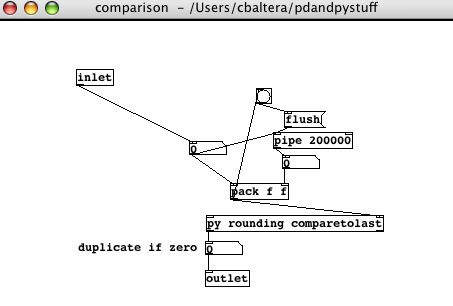

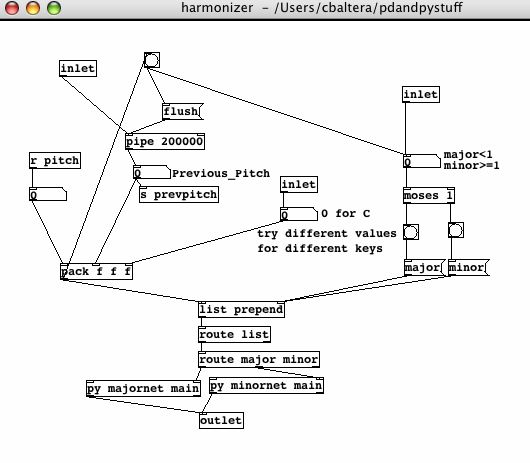

Here are some pictures of the camera and HMM patches:

And here are commented versions:

I've been putting comments on various patches and also coming up with a version of the Bayes net and graphics patch that will run with the HMM chord progression patches running at the same time. pix_sig2pix~ seems to be very useful in this situation for making the picture rather more interesting. I'm using it to texture the background, so any time there are notes coming in, the background starts changing color.

About getting training sequences without artificial beginnings ... I'm not really sure how to do that with the set up I have. I'm wondering if it would be better to just write the trained probabilities to a file, and then go in and change the initial probabilities to just be even for all three of a camera's HMMs, since I don't know how to reliably guarantee that they're trained in a way that will account for the initial probabilities in a real situation. I suppose one could imitate a real situation while training, but that would require keeping track of which list went with which action, as opposed to recording all the lists for one action before going on to another.
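
A minimal sketch of the "even initial probabilities" idea, assuming the trained model has been read into a plain dictionary. The dictionary layout and the names `uniform_initial` and `reset_initial` are hypothetical and are not taken from the actual hmm.py or its file format.

```python
def uniform_initial(n_states):
    """Return initial probabilities that are the same for every state."""
    return [1.0 / n_states] * n_states


def reset_initial(model):
    """Return a copy of the model with even initial probabilities.

    Only the 'initial' entry is replaced; the trained transition and
    observation tables are carried over unchanged.
    """
    new_model = dict(model)
    new_model["initial"] = uniform_initial(len(model["initial"]))
    return new_model


# Hypothetical trained model whose initial probabilities were skewed
# by the artificial jump at the start of every training sequence.
trained = {
    "initial": [0.98, 0.01, 0.01],
    "transition": [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1], [0.1, 0.1, 0.8]],
    "emission": [[0.9, 0.05, 0.05], [0.05, 0.9, 0.05], [0.05, 0.05, 0.9]],
}
evened = reset_initial(trained)
print(evened["initial"])
```

This keeps whatever the training learned about transitions and observations and only discards the part that the recording method is known to distort.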

Judy brought the speakers for the keyboard, so I've been playing with chords on the keyboard (all right, I admit that I've been playing with the different settings on the keyboard too). I guess I'm now wondering if it might be better to have each motion play a different chord, rather than piling up more notes in the same chord ... it's really hard to come up with a lot of different notes that sound good all together. I mean, probably if I had some underlying pattern, or duplicated pitches, or something, it would be possible, but ...

I've been experimenting with different combinations of chords in the camerahmm.pd patch. It still seems to be nontrivial to come up with nine (well, eight, since moving right from camera A turns the sound off) different chords that sound good in almost any order. Oh well. Something will turn up.

The camerahmm patch is now set up to play I, IV, and V chords in the key of C. These three chords are given in various combinations as chord progressions to go with both major and minor pentatonic scales in the pages about chord progressions to which Judy has linked.
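
As a rough illustration of what I, IV, and V in C look like as MIDI note numbers, here is a small sketch. It assumes root-position triads built on middle C (MIDI note 60); the voicing and octave actually used in camerahmm.pd may well differ, and the function name `triad` is made up for this example.

```python
# Semitone offsets of the major scale above the tonic.
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]


def triad(tonic, degree):
    """Return the root-position triad on a 1-based scale degree as MIDI notes."""
    notes = []
    for step in (0, 2, 4):  # root, third, fifth, counted in scale steps
        index = degree - 1 + step
        octave, position = divmod(index, 7)  # wrap past the top of the scale
        notes.append(tonic + 12 * octave + MAJOR_SCALE[position])
    return notes


C4 = 60  # assumed tonic: middle C
chords = {name: triad(C4, degree) for name, degree in (("I", 1), ("IV", 4), ("V", 5))}
print(chords)
```

With these assumptions, I is C-E-G, IV is F-A-C, and V is G-B-D.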

More data production and number crunching. Wrote a python program to eliminate some of the copy/pasting. Still trying to test the HMM.

And again ... I put the while loop back in the python HMM, so it now trains until convergence. I think I'll let it run on the current set-up, and then compare/analyze/look at all the numbers it has produced.

Looked at outputting chords again ... It turns out that adding signals and then dividing by the original number of signals works quite nicely. I guess I was making it more complicated than it needed to be, before.
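
The add-then-divide idea can be sketched outside Pd as well. The following assumes each note is a plain sine wave with amplitude 1; the frequencies are approximate equal-tempered values for C, E, and G, and the function name `mix` is only illustrative.

```python
import math


def mix(frequencies, sample_rate=44100, n_samples=1000):
    """Sum one sine wave per frequency and divide by the number of waves.

    Each sine stays within [-1, 1], so the scaled sum does too, which
    avoids the clipping that makes stacked notes sound harsh.
    """
    n_signals = len(frequencies)
    samples = []
    for i in range(n_samples):
        t = i / sample_rate
        total = sum(math.sin(2 * math.pi * f * t) for f in frequencies)
        samples.append(total / n_signals)
    return samples


chord = mix([261.63, 329.63, 392.00])  # roughly C4, E4, G4
peak = max(abs(s) for s in chord)
print(peak)
```

Dividing by the number of signals is the most conservative scaling; it guarantees the output never exceeds full scale, at the cost of making chords with many notes quieter per note.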

To summarize all those tests of the python HMM training code ... The test sequences were produced by the Pd HMM, which had four states and eight possible observations. I set up a patch which stored a list of 29 observations and then sent this list to the pyexthmm object's training function. A metro object allowed this to run repeatedly without human intervention. After training on a list, the probability tables defining the HMM were printed to the Pd console and the tables were reset to their initial values. After allowing this set-up to run for a while, I copied the console printout into a file, and then separated the various probability tables into their own files, and found averages. For all tests the transition probabilities in the Pd HMM were as follows:

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 10% | 70% | 10% | 10% |
| 1 | 50% | 10% | 30% | 10% |
| 2 | 10% | 50% | 20% | 20% |
| 3 | 20% | 10% | 30% | 40% |
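
For reference, a generator along the lines of the Pd HMM could be sketched in Python as follows. The transition table is the one above. The observation table is the four-state, eight-observation one used for the later tests, as listed with last week's observation probabilities in this log. The uniform choice of starting state and the function name `generate` are assumptions, since the log does not say how the Pd patch picks its first state.

```python
import random

# Rows are the current state, columns the next state.
TRANSITION = [
    [0.1, 0.7, 0.1, 0.1],
    [0.5, 0.1, 0.3, 0.1],
    [0.1, 0.5, 0.2, 0.2],
    [0.2, 0.1, 0.3, 0.4],
]

# Rows are states, columns are observations 0 through 7.
EMISSION = [
    [0.2, 0.2, 0.0, 0.3, 0.3, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.7, 0.1, 0.0, 0.2, 0.0, 0.0],
    [0.0, 0.0, 0.2, 0.0, 0.6, 0.0, 0.0, 0.2],
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.4, 0.6],
]


def generate(length, rng):
    """Return (states, observations) sampled from the HMM defined above."""
    n_states = len(TRANSITION)
    n_observations = len(EMISSION[0])
    states, observations = [], []
    state = rng.randrange(n_states)  # assumed: every start state equally likely
    for _ in range(length):
        states.append(state)
        observations.append(rng.choices(range(n_observations), weights=EMISSION[state])[0])
        state = rng.choices(range(n_states), weights=TRANSITION[state])[0]
    return states, observations


states, observations = generate(29, random.Random(0))
print(observations)
```

Passing in a seeded `random.Random` makes a run repeatable, which helps when comparing training results across code changes.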

For the first test each state had two possible outputs, and no two states shared an output. The HMM was trained on each sequence for ten iterations. 168 sequences were tested; 165 converged at iteration 1, and the remaining 4 returned a ZeroDivisionError, apparently caused by a training sequence that was extremely unlikely with the original probabilities. The average values in the table of observation probabilities were extremely close to those in the Pd HMM that produced the training sequences, as were the values in the state transition table.

The last four tests used observation sequences produced by the same version of the Pd HMM. State 0 had four possible observations, state 1 three, state 2 three, and state 3 two. An observation could be produced by more than one state. For the first two of these tests, the HMM trained ten iterations on each sequence. On the first of these, the initial observation probabilities were such that the probability of those observations which could not be produced by a given state in the Pd HMM was set to 0; that is, it was known which observations were possible at each state, but not their actual probabilities. The second of these tests did not have this information: it was assumed that any state could produce any observation.

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 4% | 54% | 29% | 14% |
| 1 | 43% | 14% | 34% | 10% |
| 2 | 28% | 41% | 11% | 19% |
| 3 | 30% | 14% | 30% | 27% |

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 4% | 26% | 27% | 44% |
| 1 | 10% | 16% | 71% | 2% |
| 2 | 16% | 60% | 6% | 18% |
| 3 | 46% | 9% | 30% | 15% |

There were 287 sequences in the first of these tests, and 207 in the second. The first test, where the possible outputs of each state were known, produced probabilities closer to those in the Pd HMM that produced the sequences. In fact, the observation probabilities especially in the second test did not seem to be similar to those in the Pd HMM, being rather more like the arbitrary initial values in the HMM being trained.

Similar results were obtained from the next two tests, which were the same as the previous two except that the addition of a while loop to the training function ensured that it iterated until convergence. For 295 samples with the possible observations for each state known, the average convergence was after 132 iterations. For 216 samples with the possible observations unknown the average convergence was after 97 iterations.

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 5% | 49% | 31% | 15% |
| 1 | 43% | 15% | 32% | 10% |
| 2 | 30% | 39% | 9% | 21% |
| 3 | 28% | 12% | 37% | 26% |

| state | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 5% | 25% | 19% | 50% |
| 1 | 12% | 12% | 71% | 4% |
| 2 | 16% | 63% | 6% | 17% |
| 3 | 50% | 9% | 29% | 12% |

Worked on putting together the chords, HMMs, and some rudimentary motion detection. I have a patch that will (eventually) take input from all three cameras and run it through HMMs to determine whether the motion is primarily to the left, the right, or neither. Depending on which camera sees which direction of movement, notes will be added to, subtracted from, or modified in the chord. The HMMs have three states and three observations each, the possible observations being positive change in x-value of the blob, negative change in x-value of the blob, and no change in x-value of the blob, measured over 0.5-second intervals. I'm not entirely sure what the states represent. Possibly whether the real-life motion is in fact positive, negative, or zero. The HMMs are trained on a file of observed sequences of nine observations each, although this number could easily be changed in the future.
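
The three observation symbols can be sketched as follows, assuming the blob's x-value is sampled once every 0.5 seconds. The symbol numbering (0, 1, 2), the `threshold` parameter, and the function name `encode_motion` are assumptions for this example and are not taken from the Pd patch.

```python
POSITIVE, NEGATIVE, NO_CHANGE = 0, 1, 2  # assumed numbering of the symbols


def encode_motion(x_positions, threshold=0.0):
    """Turn successive x-values into one observation symbol per interval.

    A change larger than `threshold` in either direction counts as
    motion; anything smaller counts as no change.
    """
    observations = []
    for previous, current in zip(x_positions, x_positions[1:]):
        delta = current - previous
        if delta > threshold:
            observations.append(POSITIVE)
        elif delta < -threshold:
            observations.append(NEGATIVE)
        else:
            observations.append(NO_CHANGE)
    return observations


# Ten made-up samples give nine observations, the sequence length used for training.
samples = [10, 12, 15, 15, 14, 11, 11, 13, 16, 18]
sequence = encode_motion(samples)
print(sequence)  # [0, 0, 2, 1, 1, 2, 0, 0, 0]
```

A nonzero threshold would keep small jitter in the blob position from being read as motion.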

Actually testing this with more than one camera is going to have to wait for Sara to figure out how to make the two cameras on her computer cooperate, so that she can send me their data.

I think I need to find a better way to record the sequences to be used for training. As I've been doing it, they all end up starting with the same observation because of the jump between the end of one motion and the beginning of the next repetition of the same motion. This leads to problems in the identification of motions because they do not necessarily start with such a jump, as they can come in different orders in a real situation.

Judy got the laptop project to work, so all it needs now is a way to arrange the speakers, laptop, and microphone so that they are all in safe and suitable positions.

I checked through the changes in the HMM from Thursday, and they seem to make sense. I added a loop so that it can train on several sequences of observations at once ... The problem seems to be that if any of the sequences does not include all of the possible observations at least once, the probabilities for the non-included observation appearing get set to zero for all states, and then all of the other training sequences get declared impossible. Maybe I should catch the ZeroDivisionError and print out "Impossible Sequence" or something...

That wouldn't really be a solution, though, it would just make the error prettier. I'm wondering about adding the probabilities from the various sequences each iteration, and then normalizing ... I just don't know if I want to get into storing all that if I don't have to ... Probably I have to ...
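
A sketch of the add-then-normalize idea, assuming each training sequence yields one table of unnormalized values per iteration (rows are states, columns are observations). The function name `combine_and_normalize` and the optional `pseudocount` are assumptions for this example; the pseudocount is a common smoothing trick and not something the training code is known to use.

```python
def combine_and_normalize(tables, pseudocount=0.0):
    """Add same-shaped tables element by element, then normalize each row.

    An observation missing from one sequence only ends up at zero if it
    is missing from every sequence (and pseudocount is zero).
    """
    n_rows = len(tables[0])
    n_cols = len(tables[0][0])
    # Build each row separately so the rows are independent lists.
    combined = [[pseudocount] * n_cols for _ in range(n_rows)]
    for table in tables:
        for r in range(n_rows):
            for c in range(n_cols):
                combined[r][c] += table[r][c]
    for row in combined:
        total = sum(row)
        if total > 0:  # leave an all-zero row alone instead of dividing by zero
            for c in range(n_cols):
                row[c] /= total
    return combined


# Made-up per-sequence values: sequence A never shows observation 1 in state 0.
seq_a = [[2.0, 0.0, 1.0], [0.0, 3.0, 0.0]]
seq_b = [[1.0, 1.0, 0.0], [0.0, 1.0, 2.0]]
result = combine_and_normalize([seq_a, seq_b])
print(result)
```

The storage cost is one extra table per kind of probability, since the per-sequence values can be added into the running total as soon as they are computed.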

The normalizing is added ... The weird thing that now seems to be happening is that the probability of the first state being zero is almost one, and the probability of transitioning to any state other than zero from state zero is also almost one, although not quite as large. This leads to a trained HMM returning an array of zeros to Viterbi almost always. It just seems to me that the computer shouldn't assume that the first state is always going to be zero, and maybe it's basing its numbers on something, but I don't know what. It seems that the program somehow favors state zero ...

By starting with a different set of initial probabilities, I got it to favor state one instead ... although the probabilities are closer to .7 than .999 as they were for state zero before. I guess I should probably try to find some real data to check it on, to see if these numbers actually make sense, instead of coming up with arbitrary strings of "observations" from my head.

I'm still not sure that it is actually doing what it is supposed to, but there doesn't really seem to be an obvious way to test it, unless I figure out how to run that C++ implementation that Judy linked to and put in the same numbers to both of them ... I may try that later.

Yesterday afternoon I spent some time looking at Gem objects in help. Really neat things I found: pix_rds, which makes "random dot stereograms", chord, which "tries to detect chords" and then identify them, and pix_pix2sig~ and pix_sig2pix~, which convert between images and audio signals.

The laptop project has been set up and is running.

Started trying to put hmm.py into pyext format, so that it can be used in Pd.

Continuing to make the python HMM pyext-compatible. I'm having a bit of trouble inputting arrays/2D lists from Pd. It seems like there is probably a better way to organize the input/output/etc. than the one I'm using. I think I may experiment.

I've made a patch that sort of plays chords, without having them sound too awful, although there is still some buzzing. It may be less noticeable on higher notes. I also put in a really repetitive web of conditionals to get the output notes to turn off at the same time as the MIDI input does. I think I may try to add that to one of the Bayes Net patches. Anyway, I didn't really proceed in any kind of precise or scientific fashion to put together the chord playing thing, just sort of looked at a bunch of help patches and stuck stuff together until it sounded tolerable, so it almost certainly could be improved a lot.

Well, input and output of probabilities to and from a file seems to be working, although it took far longer than it should have to make it work. The HMM pyext object currently has three methods, all activated by messages to the second inlet. These are print, read, and write. Print prints the tables of probabilities to the Pd console, read reads tables of probabilities from a file, and write writes the current values of the probabilities to a file. Read and write are currently set up to use the same file, and write formats its output accordingly.

No, file input and output is not working. It's got the same error that I was having with input from the Pd window yesterday: the later lines of the 2D array overwrite the earlier lines, so all the rows of probabilities end up being the same. I thought it was a problem with variables or something, and I also thought that it was sort of working at one point, but apparently I was misreading the printout. Maybe I'll try the code in plain python and see if anything becomes clearer.

Well, the same thing is happening in just python, so that means it should be findable and fixable. Now I just need to find it.

It's looping over all the rows of the array, even when it's only supposed to loop over some of the rows. Now to change that ... ???

The test file

The problem seems to be the way in which the rows of the array were initialized. They're all apparently initialized to the same list of zeros, which is then getting modified when the new values are read in. Time to add something to make the rows different lists of zeros.

So after all it was the problem I thought it was originally, just in a different place. And it's fixed now.
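
One common way this kind of bug arises in Python is multiplying a list of lists, which repeats references to a single row rather than copying it. Whether the original code did exactly this is an assumption; the sketch only shows the symptom described above and one way to get independent rows.

```python
n_rows, n_cols = 3, 2

# Every entry of `shared` refers to the SAME inner list.
shared = [[0.0] * n_cols] * n_rows
shared[0][0] = 0.5
print(shared)  # [[0.5, 0.0], [0.5, 0.0], [0.5, 0.0]]

# A list comprehension builds a fresh inner list for each row.
independent = [[0.0] * n_cols for _ in range(n_rows)]
independent[0][0] = 0.5
print(independent)  # [[0.5, 0.0], [0.0, 0.0], [0.0, 0.0]]
```

Checking `shared[0] is shared[1]` is a quick way to confirm whether two rows are really the same object.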

I set up the Pd HMM to output series of 29 observations, and trained the python HMM on 168 of those, printing the results after each training. Then I started playing around with the numbers produced, to see if anything interesting or unexpected showed up. There was much copying and pasting involved.

Sara went and got the projector and the other camera this morning. We set up the projector and hung the lace on the bulletin board, since we couldn't reach the ceiling to hang it in the corner.

Continuing with the python HMM. I think I'm doing something backwards or inside out, so I'm going to have to think it through some more before actually getting any useful functions done.

I think I now have the function for the forward algorithm mostly worked out, but the indices are a bit mixed up. I guess I'll try to test it once anyway, and then fight with the indices and suchlike.

The forward algorithm seems to be working correctly!
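
For comparison, a compact version of the forward algorithm for a discrete HMM might look like the following. This is a textbook-style sketch and not the code from hmm.py; the argument layout (lists of lists, with `emission[state][observation]`) and the two-state toy model are assumptions.

```python
def forward(observations, initial, transition, emission):
    """Return P(observations | model) for a discrete HMM.

    alpha[s] holds the probability of seeing the observations so far
    and being in state s at the current step.
    """
    n_states = len(initial)
    alpha = [initial[s] * emission[s][observations[0]] for s in range(n_states)]
    for obs in observations[1:]:
        alpha = [
            sum(alpha[prev] * transition[prev][s] for prev in range(n_states)) * emission[s][obs]
            for s in range(n_states)
        ]
    return sum(alpha)


# Made-up two-state, two-observation model for checking by hand.
initial = [0.6, 0.4]
transition = [[0.7, 0.3], [0.4, 0.6]]
emission = [[0.5, 0.5], [0.1, 0.9]]
probability = forward([0, 1], initial, transition, emission)
print(probability)  # about 0.2156
```

A useful sanity check is that the probabilities of all possible observation sequences of a given length add up to 1.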

I've been attempting to implement the Viterbi algorithm, and as of now I have something that doesn't produce error messages, but it also doesn't produce anything. As in, it returns None. This is not exactly useful. I'll go through and try to find where it stops working (if it was ever working in the first place) but I don't really expect to find it tonight. Hopefully tomorrow. Well, maybe I'll find it tonight, but I'm not sure that I'll fix it tonight. Anyway.

Another thing is, the one and some fraction implementations I've done are rather glaringly not recursive. At this point, I'm going with getting them to work, and then worrying about whether recursion would make more sense than iteration.

The problem has been traced to the section that backtracks to find the path. Too bad that was the section that made the least sense to me ... Oh well. I guess I'll just have to try to figure it out again.

Well, I think I got rid of the problem I was having yesterday, only to get a new one. The Viterbi function now works sometimes. I think it doesn't take into account the likelihood of the transitions and just chooses the highest probability state at each step, whether or not it could get to the already-decided next state from there. This is after flip-flopping indices and whatever for an hour and a half trying to make it come up with an answer that made even a little sense. And now to try to make it pay attention to transitions ...

Don't you love it when you stare for ages at a program that you think should be working, and then realize that one of your indices should have a 2 subtracted instead of a 1? That's what just happened. Viterbi works now.
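
A textbook-style sketch of Viterbi with explicit back-pointers, for reference. It is not the code from hmm.py; the list-of-lists layout and the toy model are assumptions. The back-pointers are what make the path respect the transitions instead of just picking the most likely state at each step.

```python
def viterbi(observations, initial, transition, emission):
    """Return the most likely state path for a discrete HMM."""
    n_states = len(initial)
    # delta[s]: probability of the best path so far that ends in state s.
    delta = [initial[s] * emission[s][observations[0]] for s in range(n_states)]
    backpointers = []  # one list per step after the first
    for obs in observations[1:]:
        new_delta, pointers = [], []
        for s in range(n_states):
            best_prev = max(range(n_states), key=lambda p: delta[p] * transition[p][s])
            pointers.append(best_prev)
            new_delta.append(delta[best_prev] * transition[best_prev][s] * emission[s][obs])
        delta = new_delta
        backpointers.append(pointers)
    # Start from the best final state and follow the pointers backwards.
    state = max(range(n_states), key=lambda s: delta[s])
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    path.reverse()
    return path


# Made-up two-state, two-observation model.
initial = [0.6, 0.4]
transition = [[0.7, 0.3], [0.4, 0.6]]
emission = [[0.5, 0.5], [0.1, 0.9]]
path = viterbi([0, 1, 1], initial, transition, emission)
print(path)  # [0, 1, 1]
```

For long sequences the products become very small, so real implementations usually work with log probabilities; that is left out here to keep the sketch short.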

I'm now trying to implement the backward algorithm as a necessary step before trying to put training in. I think the indices are all muddled up again and probably I should start over and think about it some more before just diving into working on it.

Well, now that I've been told how to make backwards loops in python, I've gotten it to run without spitting up an IndexError. However, it's still not giving the right values, and I don't have an example to check intermediate numbers on--I'm just comparing to the final answer for the forward algorithm. I should put times on these little paragraphs so that "now" means something. Back to fiddling with indices and stuff to hopefully get the right numbers ...

It finally works. I guess this means I have to try to figure out the training thing now ...
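
A sketch of the backward algorithm using a backwards `range` loop, for reference. It is a textbook-style version and not the code from hmm.py; the list-of-lists layout and the toy model are assumptions. On the same model and sequence, its result should equal the forward algorithm's P(O|lambda), which is the check described above.

```python
def backward(observations, initial, transition, emission):
    """Return P(observations | model) computed from the end of the sequence.

    beta[s] holds the probability of the remaining observations given
    that the model is in state s at the current step.
    """
    n_states = len(initial)
    beta = [1.0] * n_states  # nothing left to observe after the last step
    # range(start, stop, step) with step -1 counts down to index 0.
    for t in range(len(observations) - 2, -1, -1):
        next_obs = observations[t + 1]
        beta = [
            sum(transition[s][nxt] * emission[nxt][next_obs] * beta[nxt] for nxt in range(n_states))
            for s in range(n_states)
        ]
    return sum(initial[s] * emission[s][observations[0]] * beta[s] for s in range(n_states))


# Made-up two-state, two-observation model.
initial = [0.6, 0.4]
transition = [[0.7, 0.3], [0.4, 0.6]]
emission = [[0.5, 0.5], [0.1, 0.9]]
probability = backward([0, 1], initial, transition, emission)
print(probability)  # about 0.2156
```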

A description of HMM algorithms

I've been fighting with the training algorithm all day. I finally realized just before lunch that the reason that it wasn't making any sense was because I was looking at the version for continuous probabilities, and the models we've been working with have discrete probabilities. The version for discrete probabilities makes much more sense. However, it seems what I have implemented as of now makes the training sequence less likely, rather than more--I keep getting ZeroDivisionError on the second go through the loop. So I guess it doesn't make enough sense yet.

More attempts to understand and implement Baum-Welch. The current issue is that whatever I've done makes P(O|lambda) become increasingly less likely, rather than more likely as it is supposed to. I printed the code, and we've all got copies to look at now, so maybe someone will find something. I just don't know where the problem is, and I don't think I have any examples to be able to compare intermediate steps with.
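
One way to get intermediate numbers to compare against is a small reference implementation that reports P(O|lambda) on every pass. The following is a textbook-style sketch of a single Baum-Welch step for one discrete observation sequence, not the code from hmm.py. The function names, the list-of-lists layout, and the toy model are assumptions, and there is no scaling or guard against zero denominators.

```python
def forward_table(obs, pi, A, B):
    """alpha[t][s]: probability of obs[0..t] and being in state s at time t."""
    n = len(pi)
    alpha = [[pi[s] * B[s][obs[0]] for s in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[p] * A[p][s] for p in range(n)) * B[s][o] for s in range(n)])
    return alpha


def backward_table(obs, A, B):
    """beta[t][s]: probability of obs[t+1..] given state s at time t."""
    n = len(A)
    beta = [[1.0] * n]
    for t in range(len(obs) - 2, -1, -1):
        nxt = beta[0]  # the row for time t + 1
        beta.insert(0, [sum(A[s][q] * B[q][obs[t + 1]] * nxt[q] for q in range(n)) for s in range(n)])
    return beta


def baum_welch_step(obs, pi, A, B):
    """Return re-estimated (pi, A, B) and P(obs | model) before the update."""
    n, m, T = len(pi), len(B[0]), len(obs)
    alpha, beta = forward_table(obs, pi, A, B), backward_table(obs, A, B)
    likelihood = sum(alpha[-1])
    # gamma[t][s]: probability of being in state s at time t given obs.
    gamma = [[alpha[t][s] * beta[t][s] / likelihood for s in range(n)] for t in range(T)]
    new_pi = gamma[0][:]
    new_A = []
    for i in range(n):
        visits = sum(gamma[t][i] for t in range(T - 1))
        row = []
        for j in range(n):
            moves = sum(alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j]
                        for t in range(T - 1)) / likelihood
            row.append(moves / visits)
        new_A.append(row)
    new_B = []
    for j in range(n):
        visits = sum(gamma[t][j] for t in range(T))
        new_B.append([sum(gamma[t][j] for t in range(T) if obs[t] == k) / visits for k in range(m)])
    return new_pi, new_A, new_B, likelihood


# Made-up two-state, two-observation model and training sequence.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.5], [0.1, 0.9]]
obs = [0, 1, 1, 0, 0, 1, 1, 1, 0]
likelihoods = []
for _ in range(5):
    pi, A, B, likelihood = baum_welch_step(obs, pi, A, B)
    likelihoods.append(likelihood)
print(likelihoods)
```

Each value in `likelihoods` should be at least as large as the one before it; a decrease points to an indexing or normalization mistake somewhere in the step.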

This is getting nowhere. I think I need to find something else to do for the rest of the day, or at least for a while.

Judy found a bunch of places where I'd gotten the indexing mixed up, so now the training function ... well, it doesn't make the probabilities decrease anymore. I'm not certain that I am convinced that it really and truly totally works, though. It seems prone to eliminating some possible values/states completely if the first training sequence is short, and when I ran Viterbi on an HMM that had been trained on several long sequences, it returned arrays of zeros for each observation array that I tried inputting, including one of the training arrays. It's possible that that was what should have been happening, but it seems ... odd to me. It looks like tomorrow is going to involve going over the code and checking the changes (and anywhere else) to make sure that I really did want to make them, just in case.

Reading about chords. Theoretically trying to get Pd to play some of them, but there are only two output channels ... which seems to mean that if I try to make it play more than two notes at a time they sound awful. I've been trying to see if there are any objects that would help, although I don't know if I'm looking in the right places. nqpoly~ and nqpoly4 looked like they might be useful, but they don't seem to work outside of the help patches and I don't know why.

Read the articles about installations that Judy gave me last week. Looked up some stuff about them online. Links to various websites relating to those installations:

Tried using the sensor gloves as MIDI input to the Bayes Net patch. If one attempts to hold pressure on the sensors, rather than tapping and releasing, the sound is about as burbly as the audio input sometimes is. I then had difficulties getting the audio input to work ... I ended up taking out the vibrato and reattack messages, and it seems to work now. I am not sure why it stopped working in the first place.

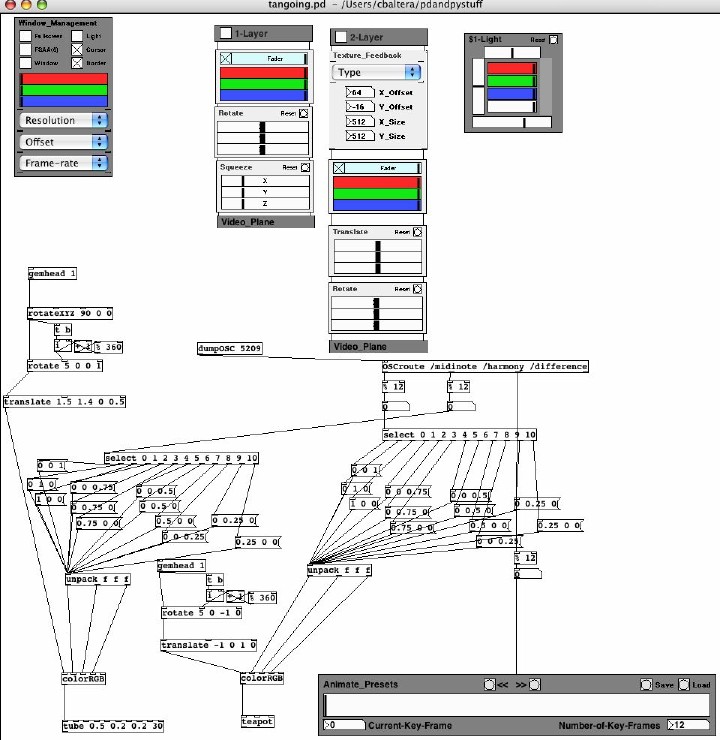

I probably should have put a screenshot of this up last week, but I didn't. The PixelTANGO and GEM patch that receives information from OSCsendchoices.pd and makes the teapot dance:

Started to work on the Laptop Project. Installed Pd successfully, started to program the patch. Unfortunately, it seems that adding or using py objects causes Pd to crash on that machine. Unknown whether it is an OS issue or a problem particular to that computer (it's running OS X 10.4.6).

More reading articles and stuff. Tried simultaneous input from the gloves and the keyboard, although not playing the keyboard while wearing the gloves. I should try that. Discussion of Hidden Markov Models and brainstorming for turning the lab into some sort of installation.

Links to articles and stuff:

Playing with more gadgetry!

Spent the morning reading random links from Judy's useful links page. Spent the afternoon fighting with various gadgets and a very small computer (we thought it might be a replacement for the other laptop in the Laptop Project, but apparently we thought wrong). There also seems to be an epidemic of non-responding audio line-in ports in the lab, as we have three new microphones, one of which works with its USB attachment, none of which works through line-in (and we tried on two or three different computers). The USB attachment mic only inputs through one channel; it does function on OS X 10.3.9 and so does the Pd patch. The tiny computer does not seem to like the Pd patch although the USB attachment mic can input to it. Unfortunately, the USB input mic is a headset, and not really something we can set up for an installation.

I just plugged both of the other two mics into the USB attachment, and they both work ...

A bit more reading of random links. Made an HMM in Pd, although I am not sure whether it is going to be useable in any sort of useful way. We worked some on trying to hook up the projector, and didn't really get very far due to lack of cables--it didn't work via USB in the G5, and we didn't have a cable to hook into the adapter we do have. The PC recognized the existence of the projector over USB, but we didn't look far enough to see if it would project. It did project when it was hooked up in place of the monitor, but that is a bit counterproductive if anyone wants to use the computer itself.

Spent some time playing with the tablet ... Apparently gemtablet doesn't work under OS X, and the tablet doesn't work with hid with the drivers installed. So I uninstalled the drivers and tried the various example patches again. The thing is, the tablet pretty much just works like a mouse without the drivers, so I don't know that we gain anything by having a tablet to input instead of a mouse (gemmouse seems perfectly happy to take mouse-style input from the tablet with or without the drivers...).

Played with Gem and suchlike some more ... Made a patch with a teapot that zips around like crazy. Judy brought an s-video cable for the projector. It does indeed work with the G5 with that cable. We discussed the article about using HMMs for gesture tracking. In an effort to better understand what some of the objects described in the paper were doing, I used Pd and python to make a patch whose function is analogous to the function of the orient2d and code2d objects described in the article. It is not immediately obvious how to do recursion in Pd, if that is even possible.

I think the problem I'm having coming up with things to do comes from not really knowing what our exact goal is. Everything's just kind of floating off in some sea of abstract concepts somewhere ... I mean, it's nice that we're intending to make an installation, but I'm still unclear as to exactly how the HMMs and the whole music manipulation/reaction/whatever bit are going to fit in. I think I want to try programming some kind of HMM thing, but it would be a lot easier if I knew what it was representing as I made it. Maybe that's a sign that my code is being too tailored for specific uses, and I should just try to make a general one, with lots of options available ...

Well, I started making a general HMM in python, and I keep running into python's lack of structure. I think I'll bring my python book on Monday, so that I'll have somewhere to look things up.

More looking at gadgetry. Also investigating pixeltango, which oddly enough seems to work in Pd-extended, at least the help patches, but crashes it when you quit the pixeltango windows. Also, getting it to display text was rather complicated, involving copying a font to some subdirectory of the Pd.app folder, and renaming the font Empty. It seems like there ought to be a better way to do that. Looked at the demo patches and videos from this website's PixelTANGO Workshop. It also seems to have some other tutorial type stuff.

I am beginning to wonder if pixeltango is actually going to be of any use to us, as it seems to only be controlled by actually moving the sliders, rather than it being (at least obviously) possible to send signals from (for example) a sound input patch to change the positions of the sliders. However, it does show the sorts of things it is possible to do with Gem.

Well, one of the tutorial-type things on the site linked yesterday says that it is or is going to be possible to control pixelTANGO with MIDI or OSC. Went looking to see if there was any more information about this in the links at the end of the tutorial. The first one I looked at turned out to be more gadgetry.

It appears that it is not yet possible to control pixelTANGO by OSC or MIDI.

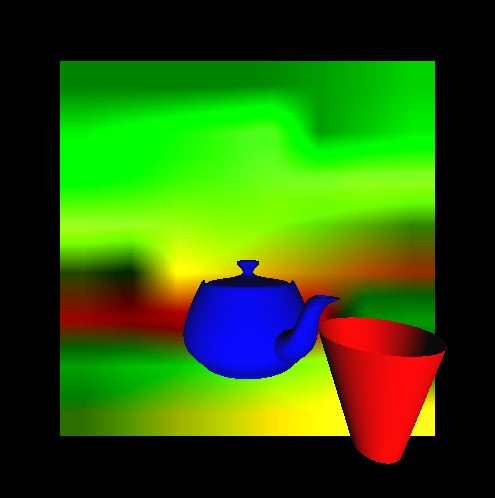

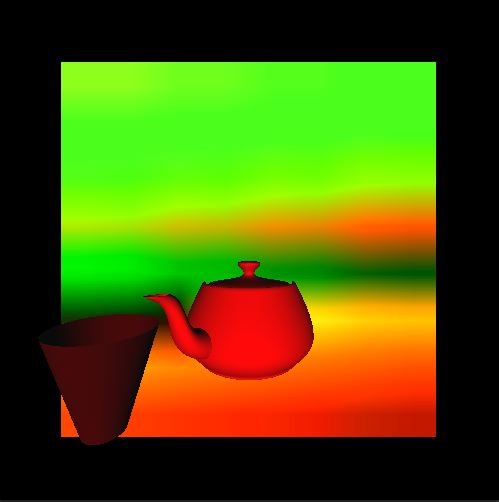

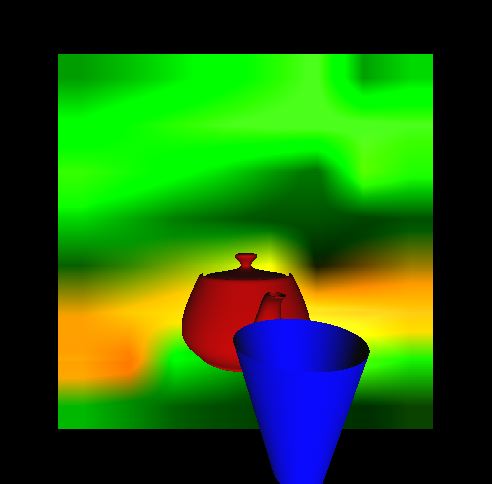

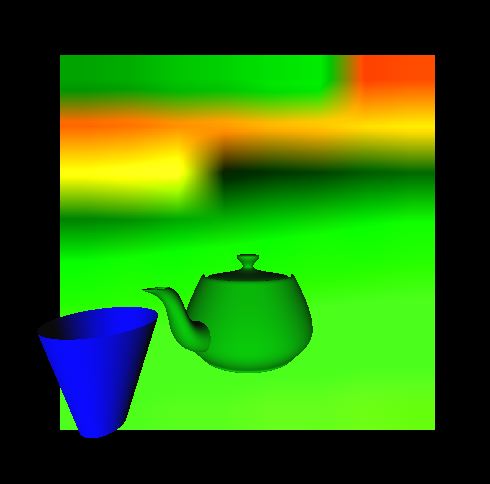

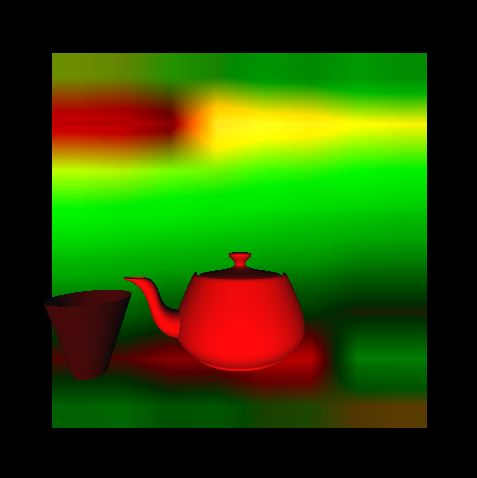

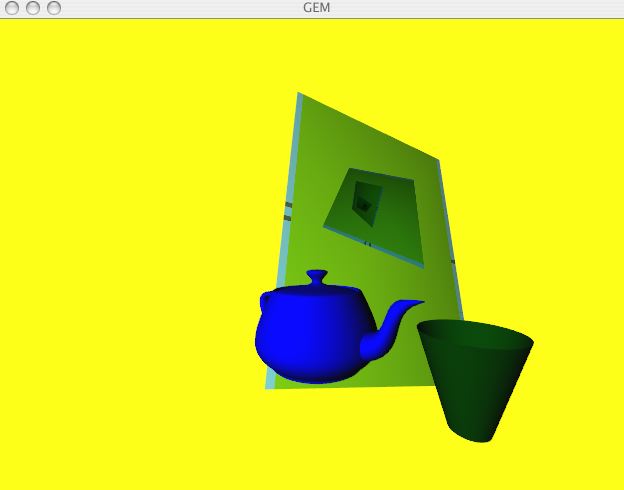

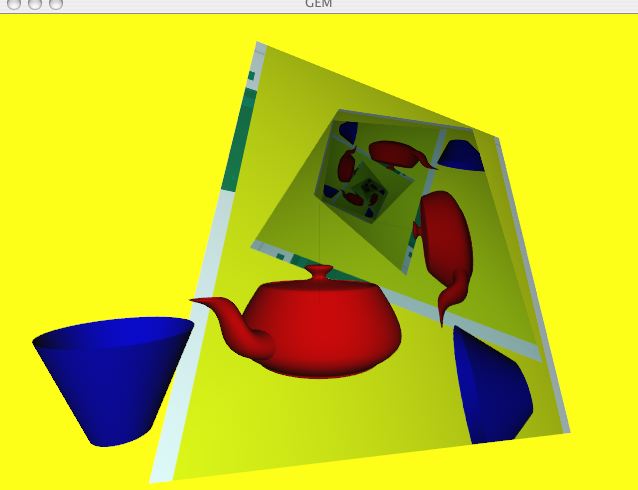

However, the pt.animate object can take a numerical input to indicate which frame to go to. I made a really big patch that is a lot less complicated than it looks, relying heavily on the help files, that receives information from the OSCsendchoices patch and displays a rotating teapot and cup, with various states of two pixelTANGO layers in the background. The color of the teapot depends on the input note, and the color of the cup depends on the harmony note; the "background" depends on the interval between them.

Discovery: It is possible to reflect GEM objects in a pixelTANGO feedback layer--objects with [gemhead 1] appear in 2-Layer feedback.

Played with the pixelTANGO patch a little bit. Read the HMM tutorial. Started playing with pmpd.

Started by pursuing Sara's discovery of how to integrate GEM objects and PixelTANGO--it kept crashing the computer she was using. However, this computer seems able to handle it. There is now a patch that can put a picture on a teapot. I think it's treating the teapot as a 3D-model in PixelTANGO, although it is possible that I would be getting the same results if I were using a video layer. I should probably check that on Monday.

We then started trying to update the chip in the I-Cube Digitizer. Getting the new chip in was not difficult; however, once the chip was in we had a lot of trouble getting the Digitizer to output and respond to sensors. In short, it wasn't working. We spent most of the day fiddling with wires and software and plugging it into two different MIDI/USB boxes and three different computers. Eventually the software got correctly installed on one of them ... Then we needed to figure out how to get the Digitizer hooked up to a synthesizer so that it would play notes in response to the sensor readings. The answer:

More fiddling with audio settings and such. I put recording capabilities into the audio key choice patch, since if you try to use audio input and have the computer play the output, rather than record it, it ends up harmonizing with itself instead of with you half the time. I wonder if this could be resolved somewhat by having the output go into headphones rather than speakers, so that the user can hear it but the microphone can't. Recorded a couple of not-so-burbly audio input recordings.

Adapted the Bayes net to "harmonize" a major scale rather than a minor pentatonic. I think I need to change the probabilities in some of the files, because it is currently making a lot of weird chords.

It's the half-steps that are causing the dissonance, because every so often it will play the notes in the scale that are a half-step apart together as "harmony", and it will just sound weird.
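To see which note pairs are the likely culprits, here is a minimal Python sketch (not part of the actual Bayes net files) that lists the pairs in a scale whose pitch classes are a half-step apart. The function name and the scale tables are illustrative assumptions; the only facts used are the standard semitone layouts of the major and minor pentatonic scales.

```python
# Semitone offsets from the tonic (standard music-theory layouts).
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]
MINOR_PENTATONIC = [0, 3, 5, 7, 10]

def half_step_pairs(offsets, root=60):
    """Return pairs of scale notes (as MIDI numbers within one octave)
    whose pitch classes are one semitone apart.

    A difference of 11 semitones counts too, because the two pitch
    classes are still neighbours (e.g. B and the C below it).
    """
    notes = [root + off for off in offsets]
    pairs = []
    for a in notes:
        for b in notes:
            if a < b and (b - a) % 12 in (1, 11):
                pairs.append((a, b))
    return pairs

if __name__ == "__main__":
    print(half_step_pairs(MAJOR_SCALE))       # pairs to avoid in C major
    print(half_step_pairs(MINOR_PENTATONIC))  # empty: no half-steps at all
```

The minor pentatonic has no half-steps, which would explain why this never came up before; in a major scale the two pairs involve the 3rd/4th and 7th/1st degrees, so those are the probabilities to look at first.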

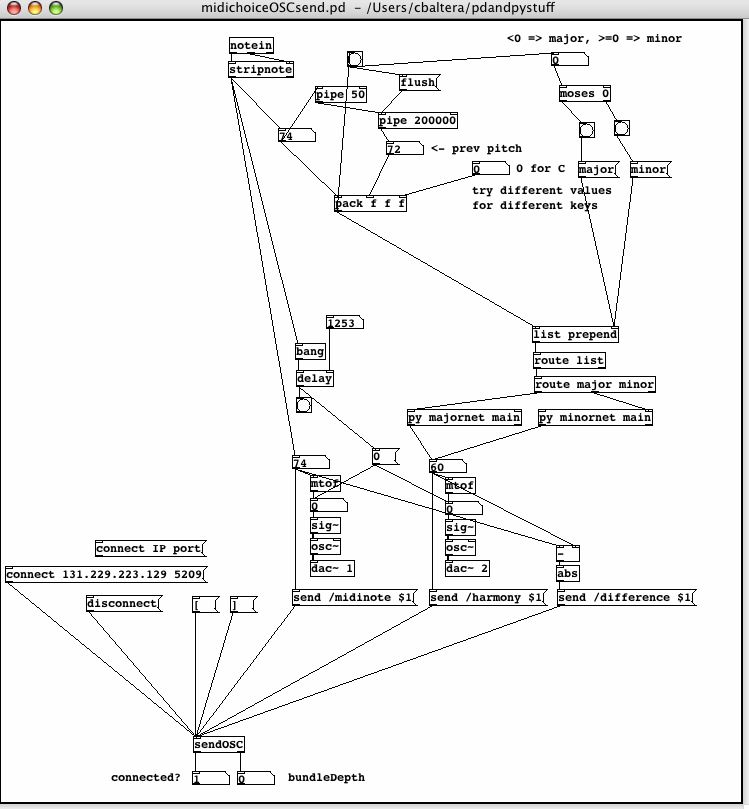

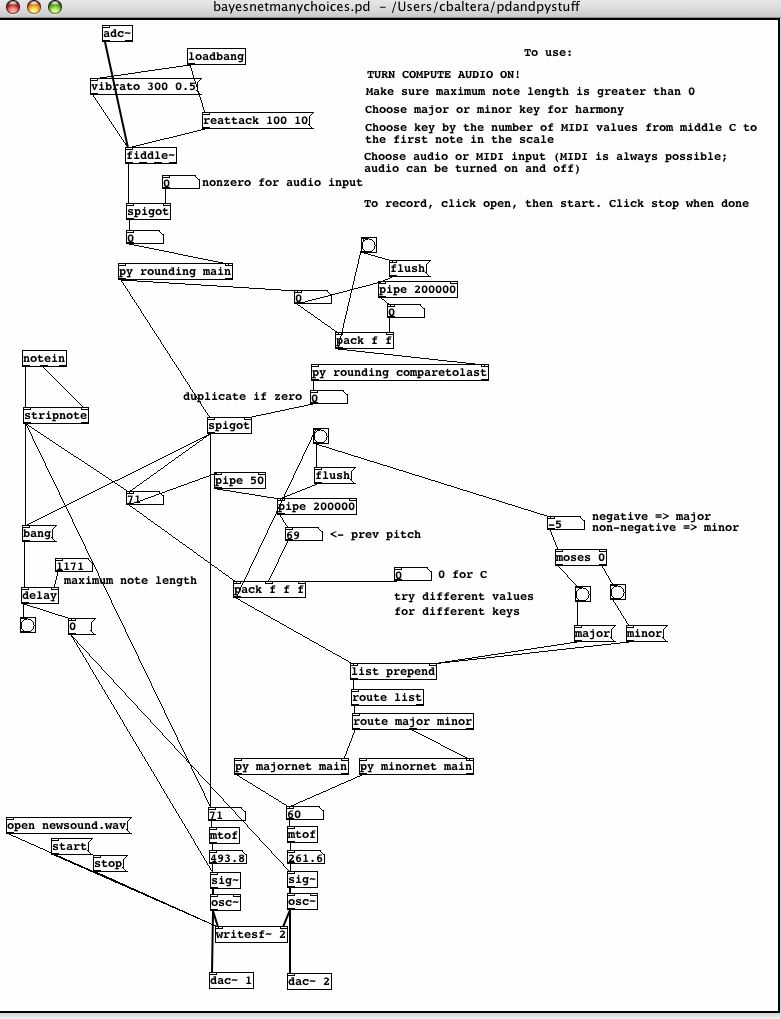

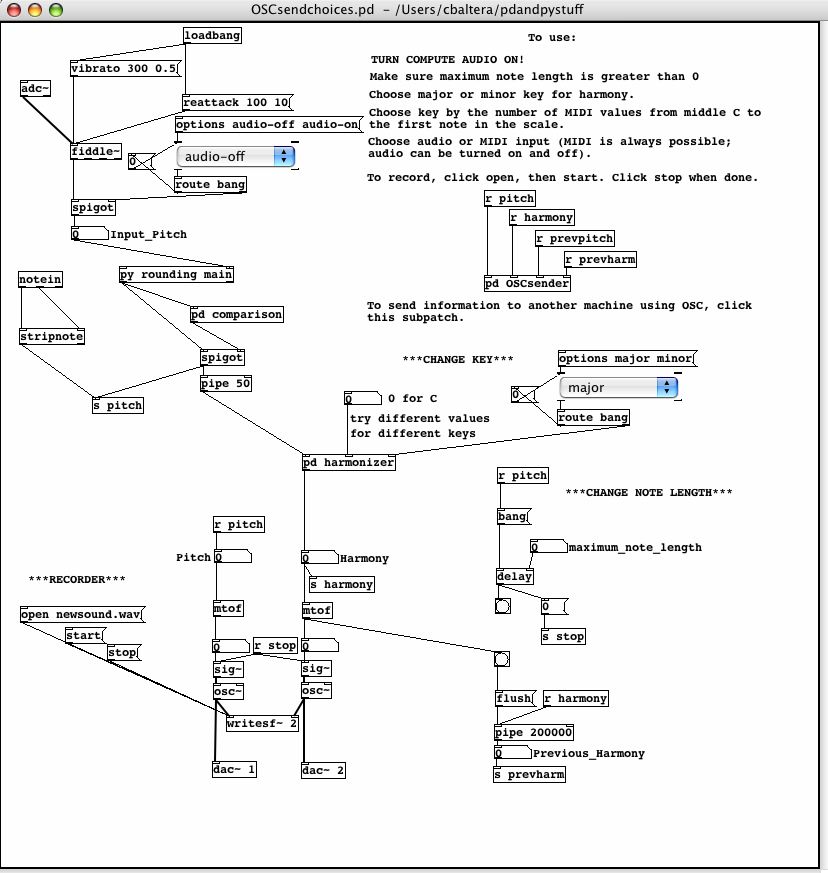

So the current situation is this: there are several versions which take MIDI input and seem to work successfully. These include two versions which take input and play harmony for C minor pentatonic, a similar version which adds the ability to record the input and the harmony, one which plays harmony for any minor pentatonic chosen by the user, and one which plays harmony for any major scale chosen by the user. Additionally there is a C minor version which delays each harmony note before outputting it. There are also multiple audio input versions that seem to work successfully, except for ambiguities in the input sometimes resulting in a burbling effect. There are several C minor pentatonic versions, many deriving from attempts to bypass fiddle~ when that was thought to be necessary. The audio input versions generally parallel the MIDI input versions, so there are also audio versions allowing for a choice of minor pentatonic scales, recording, audible output, a choice of major scales, or a combination of the above. There are also a couple of old versions where I attempted to combine MIDI and audio input; I haven't really looked at those recently.

Played with the major scale harmony patches and made recordings. Most of these are from MIDI input.

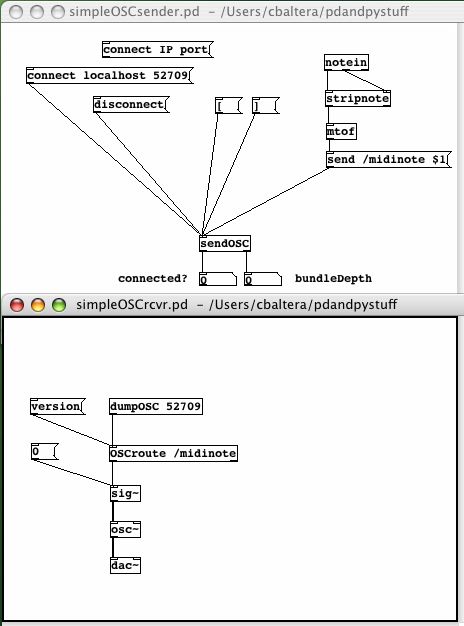

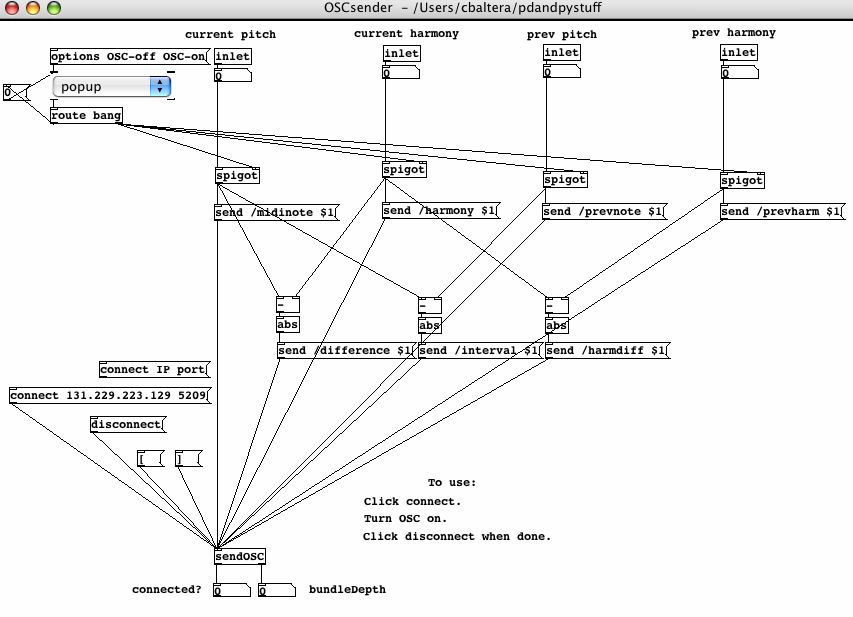

Started investigating Open Sound Control. It is included in the version of Pd that we are using, and the documentation in the Pd help browser was very informative about how to use it. I made a pair of send and receive patches, and had one computer play the notes that were being input through MIDI into the other computer.

Adapted the Bayes net for major scales to make one for minor scales. There are now versions of both audio and MIDI input that harmonize for minor scales chosen by the user.

Yesterday afternoon's discovery is teapot! Apparently one of the basic shapes which it is possible to draw using GEM is a teapot. I used OSC to send the MIDI numbers for the input and harmony notes to Sara's computer and she then used these numbers to change the color and location of the teapot. Thus, we have a teapot that does a little teapot dance as one plays on the keyboard. It also changes color, a lot.

This morning I went looking for the music to "I'm a Little Teapot," and ended up having to figure most of it out by ear, as it did not appear to be obviously available online. I added a third value to be sent through OSC (the absolute value of the difference between the input and the harmony).
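For reference, this is roughly what a three-value message like that looks like on the wire. It is a sketch in Python rather than the Pd objects actually used, and it makes two assumptions that are not from the patch: the address `/notes` is made up, and the values are sent as 32-bit integers (Pd may well send them as floats). The byte layout itself follows the OSC 1.0 format: null-terminated strings padded to a multiple of four bytes, then big-endian arguments.

```python
import struct

def _pad(raw):
    """Null-terminate an OSC string and pad it to a multiple of 4 bytes."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)

def encode_osc_ints(address, values):
    """Encode one OSC message whose arguments are all int32 values."""
    type_tags = "," + "i" * len(values)
    message = _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii"))
    for value in values:
        message += struct.pack(">i", value)  # big-endian 32-bit integer
    return message

def note_message(input_note, harmony_note, address="/notes"):
    """Build the message: input note, harmony note, and their absolute difference.

    The address "/notes" is a hypothetical placeholder.
    """
    interval = abs(input_note - harmony_note)
    return encode_osc_ints(address, [input_note, harmony_note, interval])

if __name__ == "__main__":
    print(note_message(60, 63))
```

The resulting bytes could be sent over UDP with the standard `socket` module; the receiving side only needs to know the order of the three numbers.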

I then made an audio input OSC patch to parallel the MIDI input patch. It occurred to me at some point that it might be nice to be able to switch between major and minor keys without having to go open a different patch, so I chased Pd conditionals around for a long time, and finally got something that worked. It wasn't so much the conditionals that were the problem this time, actually, as getting the messages into the correct format to use them. It didn't help that the patch for list prepend in help used append instead, confusing the issue. Once I got the conditionals working so that the user can choose major or minor harmony, I copied that into several other patches so that it would be available in patches without OSC.

And then I decided that the user should have lots of options:

I took the patch with lots of options and modified it to include OSC capabilities. Then I started moving parts of its functionality into subpatches to make the main patch less crowded and confusing. I also used the Pd send and receive objects to remove some of the longer patch cords and further de-clutter the patch. The subpatches are for OSC, computing the harmony notes, and making the comparisons to minimize audio-input burblings. I also put a bunch of comments on this patch and its subpatches to inform the user of how to use the patch.

Spent the morning mostly making aesthetic changes to the patch with all the user-controlled choices. I replaced some number boxes with popup menus for a more intuitive and understandable control system, and labeled some more parts of the patch.

Looking around the Internet for Pd-compatible gadgetry.

Started putting the Bayes net into python, and read a bunch of tutorial type things on Pd and Bayes nets. Investigated MIDI input into Pd. Was momentarily shocked by the lack of structure in python.

Seriously worked on bayesnet.py. Spent the morning putting in the summation steps, which I hadn't done on Monday. Ran into several errors.

The primary difficulty with this version is that the sound output by the program does not have any set ending point--it is necessary to turn "compute audio" off to make it stop playing whatever the last pair of notes is.

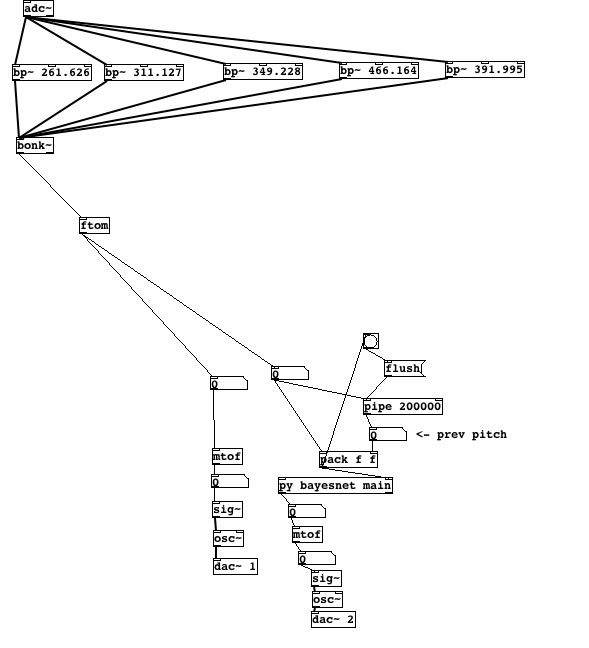

Started work on an audio input version:

The first image shows the two parts that needed to be connected by something that would determine the pitch of the incoming sound. The fiddle~ object seemed to do that; however, every time a patch containing fiddle~ was run, Pd crashed, and gave messages about a segmentation fault. Something online said that bandpass filters could be used as a lower-cost alternative to fiddle~; however, they did not seem to provide the pitch of the incoming sound.

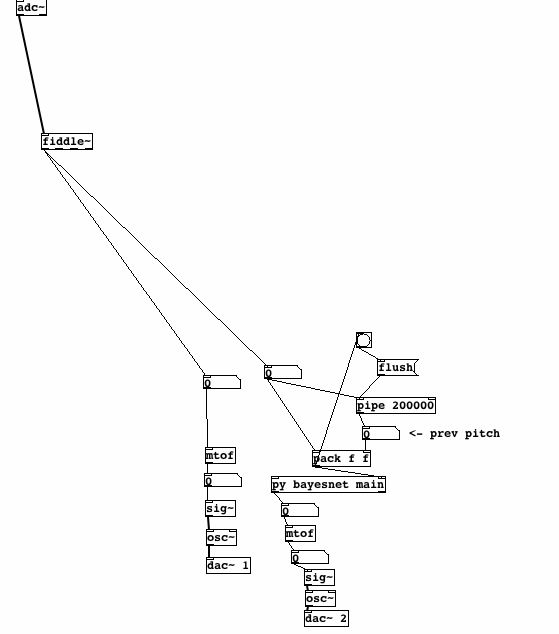

Started trying the audio input patch on the one other version of Pd I had available. Observed that fiddle~ crashed Pd-0.38-4-extended-RC1, which was the version which could run python functions; however, fiddle~ did not crash Pd-0.38-3. Wondered if I should go get another version of Pd. Wandering around on Pd websites uncovered this, so the fiddle~ crash thing is apparently an officially reported bug, even if no one seems to be doing anything about it.

Decided to actually get another version of Pd. An "extended" version was necessary to run python, so I went here and downloaded Pd-0.39.4-extended.dmg, the latest extended release. Fortunately, this version seems to be more recent than the other one I had, and does not crash with fiddle~.

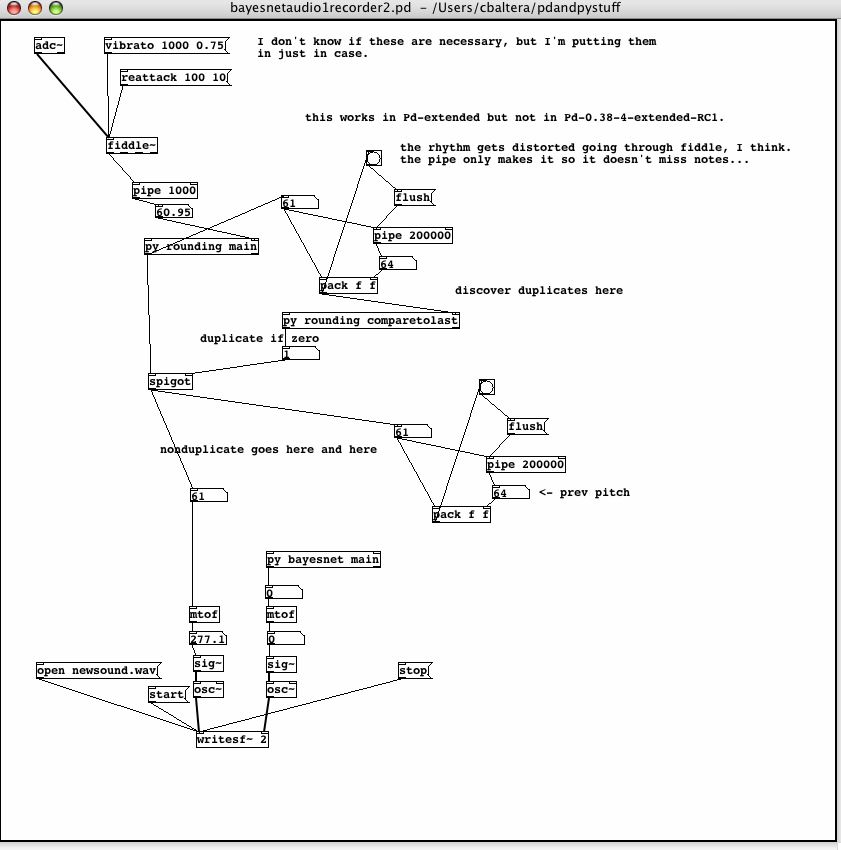

The current audio input version:

This version has the problem that it sort of burbles. It doesn't only think you've changed notes when you think you've changed notes, but also thinks you've changed notes a lot of times when you haven't. This is less pronounced when using an instrument than when singing, but still noticeable. I made several modified versions of this, which record the notes input and output rather than playing them. I actually thought I had gotten rid of the burbling problem before I left, but it was back again on Thursday morning.

One thing that I was wondering is whether it might make just as much sense to run the Bayes net only once for the 25 possible pairs of notes, and then only perform the random number and probability calculations while the music is running. Perhaps there could be some way that the user input as the music continues could change the starting probabilities in the net, to add continued relevance to the repeated computations.
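A rough Python sketch of that idea, under some loud assumptions: the 25 pairs are taken to be (previous note, current note) over the five pentatonic notes, and `placeholder_inference` is a made-up stand-in for the real Bayes net, with invented weights. Only the structure is the point: do the expensive inference once per pair, then do nothing but a table lookup and a weighted random choice while the music is running.

```python
import random

PENTATONIC = [60, 63, 65, 67, 70]  # C, Eb, F, G, Bb as MIDI numbers

def placeholder_inference(prev_note, cur_note):
    """Stand-in for the Bayes net (hypothetical weights, NOT the real ones).

    Returns one probability per candidate harmony note, summing to 1.
    """
    weights = [1.0 / (1 + abs(h - cur_note)) for h in PENTATONIC]
    total = sum(weights)
    return [w / total for w in weights]

def build_table(infer=placeholder_inference):
    """Run the inference once for each of the 25 (previous, current) pairs."""
    return {(p, c): infer(p, c) for p in PENTATONIC for c in PENTATONIC}

def pick_harmony(table, prev_note, cur_note, rng=random):
    """Per-note work at run time: one lookup and one weighted draw."""
    probabilities = table[(prev_note, cur_note)]
    return rng.choices(PENTATONIC, weights=probabilities, k=1)[0]

if __name__ == "__main__":
    table = build_table()
    print(pick_harmony(table, 60, 63))
```

Letting the user's playing shift the starting probabilities would then mean rebuilding (or partially updating) the table every so often, rather than on every note.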

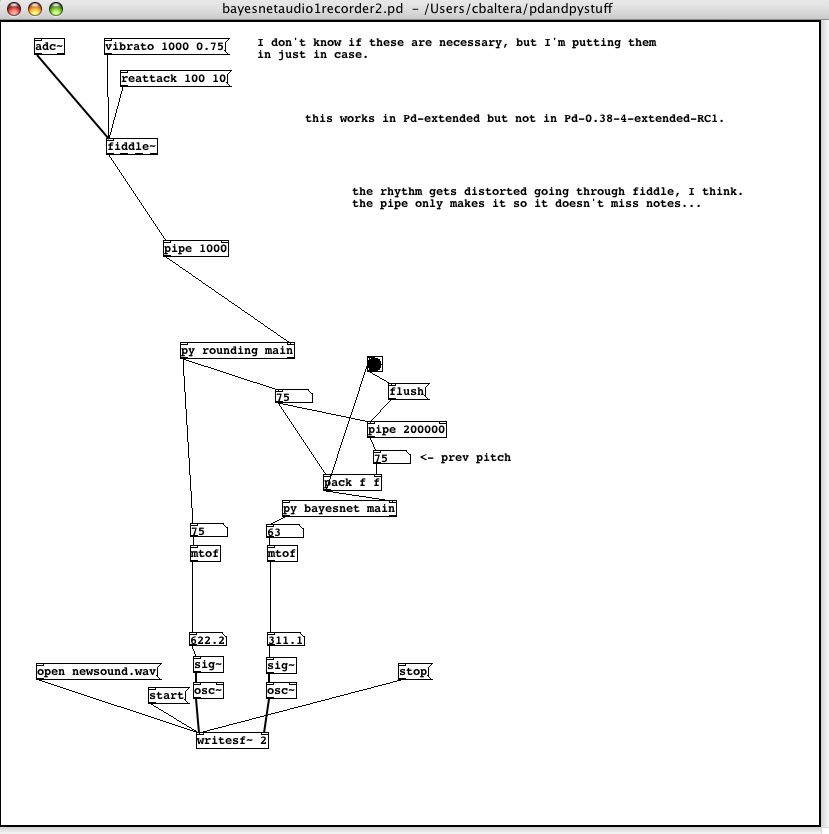

Soundfiles:

Spent most of the morning fiddling with the audio recorder to try to get rid of the burbling. I think there may also be some rhythm distortion going on due to fiddle~'s computation time, but the burbling is currently more obnoxious. Of course, most of the burbling could be because I am not a trained singer and don't know how to stay on pitch properly? Maybe I'll practice singing.

The audio recorder:

Spent a couple of hours this afternoon putting this website up.

Interesting. The note is being resent, even with the rounding, even if it changes too little to change the rounded note. Burbling, I do believe I have found you!

I think what I need to do now is somehow ensure that repeated notes coming out of py rounding main do not get sent on to the rest of the patch. I am not sure how to do this right now, as the first couple of things I've tried have not worked. Will keep working on it.
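The logic I am after, written out as a minimal Python sketch. This is not the actual py rounding code in the patch; the function name is invented. It rounds each incoming pitch to the nearest MIDI note and passes a note on only when it differs from the last one passed on.

```python
import math

def rounded_note_changes(pitches):
    """Round each pitch to the nearest MIDI note and drop immediate repeats.

    Uses floor(p + 0.5) so that halves always round up; Python's built-in
    round() rounds halves to the nearest even number instead.
    """
    passed_on = []
    last_note = None
    for pitch in pitches:
        note = math.floor(pitch + 0.5)
        if note != last_note:
            passed_on.append(note)
            last_note = note
    return passed_on

if __name__ == "__main__":
    # Small wobbles around one note collapse into a single note event.
    print(rounded_note_changes([59.8, 60.1, 60.3, 62.6, 62.9, 60.2]))
    # A pitch hovering on the boundary between two notes still gets through.
    print(rounded_note_changes([60.4, 60.6, 60.4, 60.6]))
```

Note what the second example shows: a filter like this only removes exact repeats, so a pitch that wobbles across the halfway point between two notes still produces a new note on every wobble.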

Well, I think I have something to take out repeated rounded notes; however, it doesn't really fix the burbling, just sort of makes it different.

I'm sort of tired of fighting with burbling noises, so I am going to see if I can put the ends of notes back into the midi versions. I'll fight with burbling noises again tomorrow.

Back to report that bayesnetmidi1.pd now cuts off notes longer than 2.5 seconds, and that this time can be adjusted as desired by the user. I will add this functionality to the midi recorder, but I don't think I will be able to make it work on the audio patches until I get rid of the burbling, as there are not really discrete note events to time yet.

It occurs to me, after playing a bunch of random songs on the keyboard with varying results in terms of how they sound, that it would not be that hard to make a basic adaptation of this for other keys: take the exact same Pd patch and modify the python code very slightly, just changing the number values for each note. Whether or not this would still produce reasonable-sounding things is perhaps questionable. The one that exists is still technically only supposed to apply to improvisation in the pentatonic scale C-Eb-F-G-Bb, but it assumes other notes are Bbs and overall sounds decent for songs in keys with two or three flats, not that my sample size for this is very large.
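Changing the number values amounts to adding a constant to every note. A small sketch, assuming the python code stores the scale as a list of MIDI note numbers or pitch classes (the names below are illustrative, not the ones in the actual file):

```python
# C minor pentatonic as pitch classes: C, Eb, F, G, Bb.
C_MINOR_PENTATONIC = [0, 3, 5, 7, 10]

def transpose_pitch_classes(pitch_classes, semitones):
    """Shift every pitch class by the same number of semitones, wrapping at 12."""
    return [(pc + semitones) % 12 for pc in pitch_classes]

def transpose_notes(midi_notes, semitones):
    """Shift concrete MIDI note numbers; no wrapping, the octave is kept."""
    return [note + semitones for note in midi_notes]

if __name__ == "__main__":
    # Up two semitones gives D minor pentatonic: D, F, G, A, C.
    print(transpose_pitch_classes(C_MINOR_PENTATONIC, 2))
    print(transpose_notes([60, 63, 65, 67, 70], 2))
```

Since every interval between notes is preserved, any probabilities keyed on scale position rather than on absolute note number would carry over unchanged.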

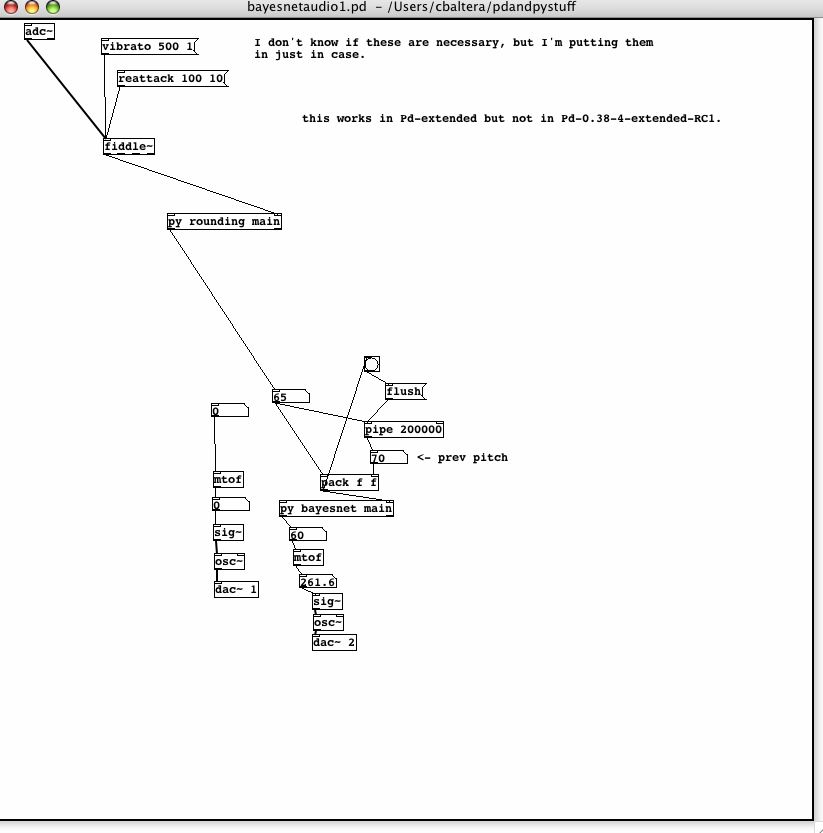

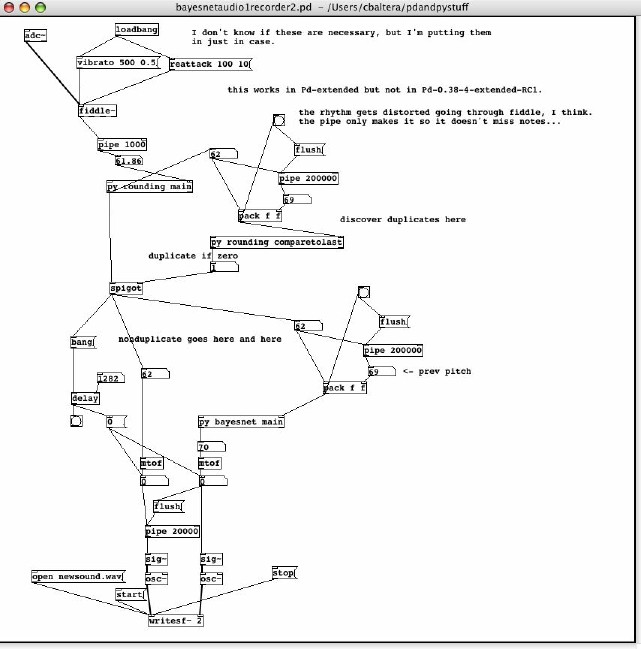

Realized that since the vibrato and reattack messages to fiddle~ are messages, they probably need to be banged for fiddle~ to pay any attention to them. Changed a few settings around, added the cut-off to the audio patches. There's a lot of lag between the audio input and the "harmony" output. I wonder if that's the source of part of the burbling problem. The current version of the audio recorder:

Here we go again. I thought I'd gotten it to stop missing notes in audio input, at least for the recorder one, but apparently not. Of course it could be that I am just singing on all the lines between notes, and it's getting confused ...

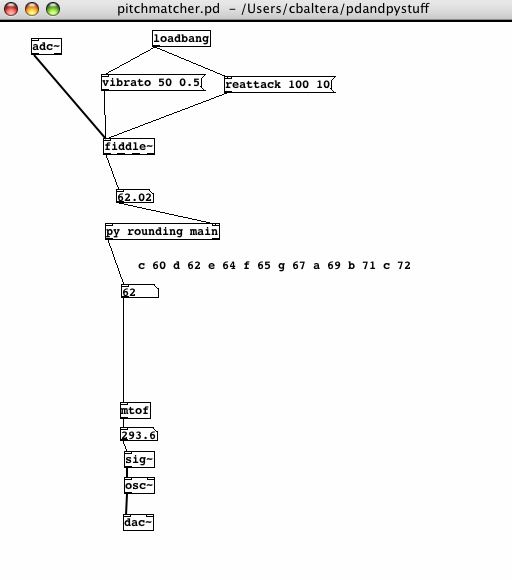

I've set up this patch that basically tells me what note I'm singing, and it's pretty depressing singing to it and finding that I really do hover around not in-tune notes. Also that it's really hard to sing exactly the MIDI pitch that a note is supposed to be--usually I'll be .3ish below, try to adjust and end up .2ish above. I suppose this is why I'm not majoring in vocal performance or something. The patch:
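The same measurement can be sketched in Python, assuming equal temperament with A4 = 440 Hz (MIDI note 69). The patch itself gets its pitch from fiddle~; here the input is just a frequency in Hz, and the function names are made up for illustration.

```python
import math

def freq_to_midi(freq_hz, a4_hz=440.0):
    """Convert a frequency to a fractional MIDI pitch (A4 = 69)."""
    return 69 + 12 * math.log2(freq_hz / a4_hz)

def tuning_report(freq_hz):
    """Return (nearest MIDI note, deviation in semitones).

    A negative deviation means flat, a positive one means sharp.
    """
    pitch = freq_to_midi(freq_hz)
    nearest = math.floor(pitch + 0.5)
    return nearest, pitch - nearest

if __name__ == "__main__":
    # A note sung about 0.3 of a semitone below A4.
    flat_a4 = 440.0 * 2 ** (-0.3 / 12)
    print(tuning_report(flat_a4))
```

A deviation of 0.3 is 30 cents, so hovering between .3 below and .2 above stays within the same rounded note; it is only near the halfway point that the rounded note flips.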

Admittedly this is not terribly well tested, but it seems that the audio recorder burbles less when there are breaks between notes than when there are not. I need to make something that will output the same string of notes when commanded, so that I can test this properly.

I just wandered off and made a variation of the MIDI input patch that can take input in various different keys. Well, only five different pentatonic scales right now, but I think I will figure some more pentatonic scales out and give it the ability to deal with input in any of them. Voila the screenshot:

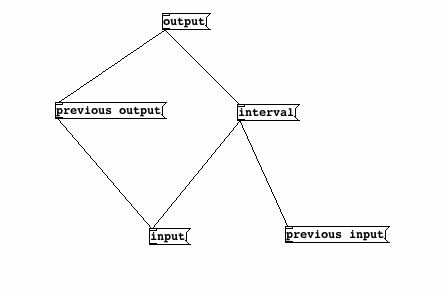

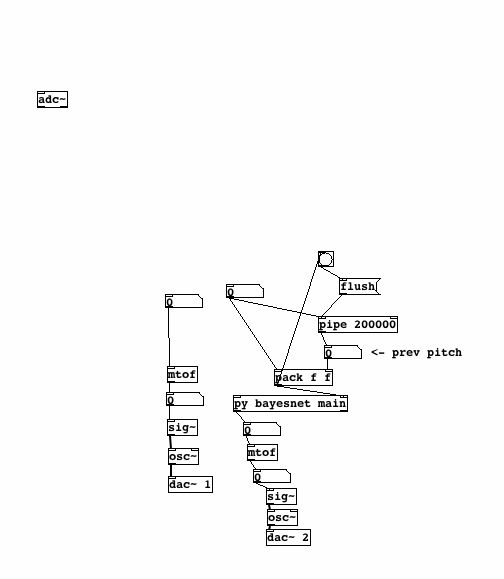

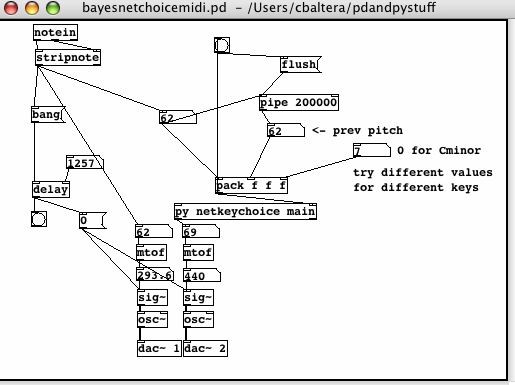

So I realized that the previous pitch was updating prematurely, as in at the same time the current pitch was, in the key choice patch. Apparently packing three numbers takes significantly more time than packing two numbers. After a bit of fiddling, I put a 50 millisecond pipe between the current pitch and the 200000 millisecond pipe, which delays the update of the previous pitch value sufficiently that the bang to flush the long pipe happens before the value in the pipe changes.